Install PaletteAI on EKS

Use this guide to install PaletteAI on an Amazon EKS cluster. The deployment uses the hub-as-spoke pattern with Zot as the Open Container Initiative (OCI) registry.

Prerequisites

- Complete the Set Up EKS Environment guide. This guide creates the required PaletteAI resources on your hub and spoke clusters.

-

Use a Kubernetes cluster as the PaletteAI hub.

-

Access to the hub cluster using the built-in Kubernetes cluster-admin ClusterRole.

-

Minimum Kubernetes versions

| Cluster Type | Kubernetes Version |
| --- | --- |
| Hub | >= 1.31.0 |
| Spoke | >= 1.31.0 |

-

Minimum resource requests

| Cluster Type | CPU | Memory | Storage |
| --- | --- | --- | --- |
| Hub | 3388m | 2732Mi | 10Gi |
| Spoke | 1216m | 972Mi | 10Gi |

-

Ensure the hub cluster can reach the public Amazon Elastic Container Registry (ECR) that hosts the mural and mural-crds charts.

-

Access to the hub cluster kubeconfig file.

-

Install Flux controllers on the hub cluster if you plan to use the recommended Flux-managed workflow.

-

Install the following tools on the machine you use to install or upgrade PaletteAI:

-

Configure the hub cluster Kubernetes API server to trust Dex as an identity provider. PaletteAI deploys Dex as part of the installation. This requirement applies only to the hub cluster, not to spoke clusters. For details, refer to Configure Kubernetes API Server to Trust OpenID Connect (OIDC) Provider.

-

By default, PaletteAI is configured to terminate HTTPS at the load balancer. To enable this behavior you need:

-

A domain name available for PaletteAI.

-

A public certificate issued by a Certificate Authority (CA). This guide assumes that the certificate is issued by AWS Certificate Manager. Refer to the Request a public certificate in AWS Certificate Manager guide if you need to create a new certificate.

Info: PaletteAI communicates internally over HTTPS. Refer to our Security page for a detailed explanation of the security architecture.
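The minimum Kubernetes version listed in the prerequisites can be checked from a script before you begin. The following is a minimal sketch, not part of the PaletteAI tooling. The helper name version_ge is hypothetical, the sample version string is illustrative, and the sketch assumes a sort that supports the -V (version sort) option.

```shell
#!/bin/sh
# version_ge ACTUAL MINIMUM
# Succeeds when ACTUAL is greater than or equal to MINIMUM.
# Hypothetical helper; assumes `sort -V` (version sort) is available.
version_ge() {
  actual="${1#v}"         # drop a leading "v", as in v1.31.2
  actual="${actual%%-*}"  # drop a vendor suffix such as -eks-1234567
  minimum="${2#v}"
  lowest="$(printf '%s\n%s\n' "$minimum" "$actual" | sort -V | head -n 1)"
  [ "$lowest" = "$minimum" ]
}

# Illustrative check against the 1.31.0 minimum for hub and spoke clusters.
if version_ge "v1.31.2-eks-1234567" "1.31.0"; then
  echo "Kubernetes version meets the minimum"
else
  echo "Kubernetes version is too old"
fi
```

In practice, the first argument would come from the server version that your cluster reports, for example the gitVersion field in the output of kubectl version --output json.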

Enablement

-

Download the latest Helm chart values file. This example uses curl.

curl --output values.yaml --silent https://docs.palette-ai.com/resources/assets/hosted/helm/values.yaml

-

Open the Helm chart values file in a text editor of your choice and complete the following sections. This example uses vi.

vi values.yaml

Global

-

Use the global section to configure overarching settings for the PaletteAI deployment. Review and modify the following values as necessary.

-

Set global.dns.domain to the primary domain for the deployment. Do not include a protocol. For example, use example.org, not https://example.org.

global:
  dns:
    domain: 'example.acme.org'

-

In global.auditLogging.basicAuth, change the default username and password for audit logging. These credentials secure the Alertmanager instance that receives audit events. You reuse them when you configure the Base64-encoded Authorization header in the alertmanager section.

global:
  auditLogging:
    basicAuth:
      username: '<your-username>'
      password: '<your-password>'

Refer to Audit Logging to learn more about configuring audit logging, querying audit events, and forwarding logs to long-term storage.

-

Configure the metrics collection settings. Provide an existing, external Prometheus server that is reachable from the hub cluster and every spoke cluster. Spoke clusters use Prometheus agents to ship metrics to the server via remote_write.

Set global.metrics.prometheusBaseUrl to the external Prometheus server URL (for example, https://your-external-prometheus:9090). Include only the protocol, host, and port. Do not include any API paths.

global:
  metrics:
    prometheusBaseUrl: 'https://your-external-prometheus:9090'
    timeout: '5s'
    scrapeInterval: '15s'
    agentType: 'prometheus-agent-minimal'
    username: ''
    password: ''

By default, global.metrics.agentType is set to prometheus-agent-minimal. The minimal agent configuration only collects spoke cluster CPU and GPU utilization metrics. You may change global.metrics.agentType to prometheus-agent to ship all node-exporter and dcgm-exporter metrics from spoke clusters for comprehensive observability.

If your Prometheus server requires basic authentication, configure the username and password fields. Leave these fields blank if authentication is not required.

Refer to Configure Prometheus Agent Monitoring for guidance on agent types, Prometheus and Grafana prerequisites, and GPU metrics.

Tip: If you need to set up a Prometheus server, you may find the Deploy Monitoring Stack guide helpful.

Set global.kubernetesProvider to EKS.

global:
  kubernetesProvider: EKS
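The two format rules in the global section (no protocol in global.dns.domain, and no API path in global.metrics.prometheusBaseUrl) are easy to get wrong when copying values. The following is a minimal pre-flight sketch. The function names is_bare_domain and is_base_url are hypothetical and are not part of PaletteAI or Helm. They only check the shape of a string and do not verify that the host exists.

```shell
#!/bin/sh
# Hypothetical pre-flight checks for two values in values.yaml.

# is_bare_domain VALUE: succeeds when VALUE has no protocol and no path.
is_bare_domain() {
  case "$1" in
    ""|*://*|*/*) return 1 ;;
    *) return 0 ;;
  esac
}

# is_base_url VALUE: succeeds when VALUE looks like protocol://host[:port]
# with no API path after the host.
is_base_url() {
  case "$1" in
    http://*|https://*) ;;
    *) return 1 ;;
  esac
  rest="${1#*://}"   # strip the protocol
  rest="${rest%/}"   # tolerate a single trailing slash
  case "$rest" in
    ""|*/*) return 1 ;;
    *) return 0 ;;
  esac
}

# Illustrative values taken from the examples in this guide.
is_bare_domain "example.acme.org" && echo "domain looks valid"
is_base_url "https://your-external-prometheus:9090" && echo "Prometheus base URL looks valid"
is_base_url "https://your-external-prometheus:9090/api/v1" || echo "API paths are rejected"
```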

FleetConfig

-

To configure an EKS FleetConfig, update the following parameters in the fleetConfig section of your Helm chart.

| Parameter | Description |
| --- | --- |
| source.bundleVersion | The source bundle for the ClusterManager and all Klusterlets. Use a bundle version of v0.16.1 or later for EKS clusters. |
| registrationAuth.driver | The driver used for authentication. Must be awsirsa. |
| registrationAuth.hubClusterARN | The Amazon Resource Name (ARN) of the hub cluster. |
| registrationAuth.autoApprovedARNPatterns | Optional list of spoke cluster name ARN patterns that the hub will auto-approve. |
| hub.apiServer | The hub cluster's API server endpoint. This can be found in the hub kubeconfig file. |
| spokes[i].clusterARN | The ARN of the spoke EKS cluster. |
| spokes[i].klusterlet.forceInternalEndpointLookup | Dictates if the internal endpoint is looked up via the cluster-info ConfigMap instead of the hub cluster's public API server endpoint. |

Warning: Do not change the spokes[i].name: hub-as-spoke value if using the hub-as-spoke pattern when installing PaletteAI.

fleetConfig:
  enabled: true
  spokeFeatureGates:
    ClusterClaim: true
    RawFeedbackJsonString: true
  source:
    bundleVersion: v1.0.0
    registry: quay.io/open-cluster-management
  registrationAuth:
    driver: "awsirsa"
    hubClusterARN: "arn:aws:eks:<hub-region>:<hub-account-id>:cluster/<hub-cluster-name>"
    autoApprovedARNPatterns: []
  hub:
    # The hub cluster's API server. Required when configuring an EKS or GKE FleetConfig.
    apiServer: "https://<cluster-id>.<eks-url-suffix>"
  spokes:
    - name: hub-as-spoke # do not edit this name if you are using the default hub-as-spoke mode
      clusterARN: "arn:aws:eks:<spoke-region>:<spoke-account-id>:cluster/<spoke-cluster-name>"
      klusterlet:
        forceInternalEndpointLookup: false

-

Update fleetconfig-controller.serviceAccount.annotations to reference the Amazon Resource Name (ARN) created for your hub cluster. The hub-account-id is the AWS account ID under which your hub EKS cluster is deployed.

fleetconfig-controller:
  enabled: true
  serviceAccount:
    annotations:
      eks.amazonaws.com/role-arn: arn:aws:iam::<hub-account-id>:role/FleetConfigHubIrsa

This is the minimum configuration required to install a hub-as-spoke FleetConfig. If you are configuring dedicated spoke clusters, also complete the following steps for each spoke cluster.

Hub with dedicated spoke clusters

- Create a copy of the spoke kubeconfig and update its exec command to pass the --role flag with the FleetConfigJoin role ARN.

users:
  - name: user@spoke-cluster-1.us-west-2.eksctl.io
    user:
      exec:
        apiVersion: client.authentication.k8s.io/v1beta1
        args:
          - token
          - -i
          - <spoke-cluster-name>
          - --role
          - arn:aws:iam::<spoke-account-id>:role/FleetConfigJoin
        command: aws-iam-authenticator

-

Upload the copied kubeconfig to a Kubernetes Secret on the hub cluster.

export KUBECONFIG=hub.kubeconfig
kubectl create secret generic spoke-kubeconfig-i --from-file=kubeconfig=spoke-i.kubeconfig --namespace <spoke-namespace>

-

Update fleetConfig.spokes[i].kubeconfig to reference the spoke-kubeconfig-i Secret you created in the previous step, and set kubeconfig.inCluster: false.

fleetConfig:
  spokes:
    - kubeconfig:
        # The context to use in the kubeconfig file. Leave empty to use the current context.
        context: ''
        # If set, the kubeconfig will be read from the cluster. Only applicable for same-cluster operations.
        inCluster: false
        # A reference to an existing secret containing a kubeconfig. Must be provided for remote clusters.
        # For same-cluster, must be provided unless InCluster is set to true.
        secretReference:
          # The name of the secret.
          name: 'spoke-kubeconfig-i'
          # The map key to access the kubeconfig.
          kubeconfigKey: 'kubeconfig'

-

Rename fleetConfig.spokes[i].name from hub-as-spoke to the name you want to use.
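The hubClusterARN and clusterARN values in the FleetConfig follow the pattern arn:aws:eks:<region>:<account-id>:cluster/<cluster-name>. The following sketch builds such an ARN and rejects values that still contain placeholders. The function names are hypothetical. The validation pattern is an assumption that covers only the standard aws partition and a 12-digit account ID, so adjust it if you deploy to another partition.

```shell
#!/bin/sh
# eks_cluster_arn REGION ACCOUNT_ID CLUSTER_NAME
# Prints an EKS cluster ARN in the format used by the fleetConfig section.
eks_cluster_arn() {
  printf 'arn:aws:eks:%s:%s:cluster/%s\n' "$1" "$2" "$3"
}

# is_eks_cluster_arn VALUE
# Succeeds when VALUE matches the assumed ARN shape (standard aws partition only).
is_eks_cluster_arn() {
  printf '%s\n' "$1" |
    grep -Eq '^arn:aws:eks:[a-z0-9-]+:[0-9]{12}:cluster/[A-Za-z0-9][A-Za-z0-9_-]*$'
}

# Illustrative values; replace them with your own region, account ID, and cluster name.
arn="$(eks_cluster_arn "us-west-2" "123456789012" "spoke-cluster-1")"
echo "$arn"

# An unreplaced placeholder from values.yaml fails the check.
is_eks_cluster_arn "arn:aws:eks:<hub-region>:<hub-account-id>:cluster/<hub-cluster-name>" ||
  echo "placeholder ARN rejected"
```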

Alertmanager

-

Navigate to the alertmanager section. Update credentials for the alertmanager instance based on the credentials you configured in the global section.

You must provide a Base64-encoded string for the Authorization header. Generate the Base64-encoded string using the following command. Replace username and password with the username and password you configured in the global section.

echo -n "username:password" | base64

The following example shows the livenessProbe and readinessProbe sections with the Base64-encoded string. Replace <your-base64-encoded-string> with the Base64-encoded string you generated.

alertmanager:
  livenessProbe:
    httpGet:
      path: /-/healthy
      port: http
      scheme: HTTPS
      httpHeaders:
        - name: Authorization
          value: 'Basic <your-base64-encoded-string>'
  readinessProbe:
    httpGet:
      path: /-/ready
      port: http
      scheme: HTTPS
      httpHeaders:
        - name: Authorization
          value: 'Basic <your-base64-encoded-string>'

For further instructions on accessing audit logs and configuring long-term storage, refer to Audit Logging.
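The Authorization header value for the Alertmanager probes is the word Basic followed by the Base64 encoding of username:password. The following sketch wraps that encoding in a small function. The function name basic_auth_header and the sample credentials are illustrative only. Use the credentials you set in global.auditLogging.basicAuth.

```shell
#!/bin/sh
# basic_auth_header USERNAME PASSWORD
# Prints the full header value expected by the livenessProbe and readinessProbe.
basic_auth_header() {
  # printf avoids the trailing newline that would otherwise be encoded;
  # tr removes line wrapping that some base64 implementations add to long output.
  encoded="$(printf '%s:%s' "$1" "$2" | base64 | tr -d '\n')"
  printf 'Basic %s\n' "$encoded"
}

# Illustrative credentials only.
basic_auth_header "admin" "secret"   # prints: Basic YWRtaW46c2VjcmV0
```

Paste the printed value, including the Basic prefix, into both httpHeaders entries.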

Canvas

-

Canvas controls the user interface. Review and modify the following values as needed.

-

To configure the ingress for Canvas, set canvas.ingress.enabled to true. Set canvas.ingress.domain to your domain name. Omit the http:// or https:// prefix.

canvas:
  ingress:
    enabled: true
    annotations: {}
    ingressClassName: nginx
    domain: replace.with.your.domain # No HTTP/HTTPS prefix.
    matchAllHosts: false
    tls: []
    paths:
      - path: /ai
        pathType: ImplementationSpecific
        backend:
          service:
            name: canvas
            port:
              number: 2999

Set canvas.enableHTTP to true to let the load balancer terminate Transport Layer Security (TLS). Leave canvas.ingress.tls empty.

canvas:
  enableHTTP: true

-

The last portion of the Canvas configuration is the OIDC configuration. If you defer configuring OIDC for Dex, you may do the same for Canvas and configure it later.

In the canvas.oidc section, enter a unique string for the sessionSecret. For redirectURL, replace <your-domain> with your domain. Do not remove the /ai/callback path.

canvas:
  oidc:
    sessionSecret: '<your-session-secret>'
    sessionDir: '/app/sessions'
    issuerK8sService: 'https://dex.mural-system.svc.cluster.local:5554/dex'
    skipSSLCertificateVerification: true
    redirectURL: 'https://<your-domain>/ai/callback'

If you did not configure your Kubernetes cluster to trust Dex as an OIDC provider, you must configure the canvas.impersonationProxy section to enable user impersonation.

The example below shows how to map the local Dex user admin@example.com to an example Kubernetes group admin. Refer to our Configure User Impersonation guide to learn more about how to configure user impersonation for OIDC groups and other use cases.

Example user impersonation setup

canvas:
  impersonationProxy:
    enabled: true
    userMode: 'passthrough'
    groupsMode: 'map'
    userMap: {}
    groupMap: {}
    dexGroupMap:
      'admin@example.com': [ 'admin' ]
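The sessionSecret in canvas.oidc only needs to be a unique, hard-to-guess string. One way to produce such a value is sketched below. The function name generate_secret is hypothetical, and the 32-byte length is an assumption, not a PaletteAI requirement. The sketch relies on /dev/urandom, head -c, and od, which are available on most Linux and macOS systems.

```shell
#!/bin/sh
# generate_secret
# Prints 64 hexadecimal characters derived from 32 random bytes.
generate_secret() {
  head -c 32 /dev/urandom | od -An -tx1 | tr -d ' \n'
  echo
}

SESSION_SECRET="$(generate_secret)"
echo "Generated a session secret with ${#SESSION_SECRET} characters"
```

The same approach may be used for the static client secret in the Dex configuration.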

Dex

-

Dex authenticates users to PaletteAI through SSO. You can configure Dex to connect to an upstream OIDC provider or to a local user database. For this installation, you will configure Dex to connect to an upstream OIDC provider. If you want to configure an OIDC provider later, you can do so; however, Dex still requires some basic configuration.

-

Set dex.config.issuer to your domain. Do not remove the /dex path.

dex:
  config:
    issuer: 'https://<your-domain>/dex'

-

This next part may be deferred, but we strongly recommend configuring at least one connector. Set dex.config.connectors to the connectors you want to use. The Dex documentation has examples for each of the connectors.

Below is an example of an OIDC connector that connects to AWS Cognito. The oidc type can be used for any OIDC provider that does not have a native Dex connector. Different OIDC providers may require different configurations.

Example AWS Cognito configuration

dex:
  config:
    connectors:
      - type: oidc
        id: aws
        name: AWS Cognito
        config:
          issuer: https://cognito-idp.us-east-1.amazonaws.com/us-east-1_xxxxxx
          clientID: xxxxxxxxxxxxxxx
          clientSecret: xxxxxxxxxxxxxxxxx
          redirectURI: https://<your-domain>/dex/callback # Dex callback URL for the authorization code flow; redirects to the application callback URL
          getUserInfo: true
          userNameKey: email
          insecureSkipEmailVerified: true
          insecureEnableGroups: true
          scopes:
            - openid
            - email
            - profile
          promptType: consent
          claimMapping:
            groups: groups

-

Proceed to the dex.config.staticClients section. Replace <your-client-secret> with a unique secret value and <your-domain> with your domain. Do not remove the /ai/callback path for the mural client.

dex:
  config:
    staticClients:
      - id: mural
        redirectURIs:
          - 'https://<your-domain>/ai/callback'
        name: 'mural'
        secret: '<your-client-secret>'
        public: false
        trustedPeers:
          - kubernetes
      - id: kubernetes
        redirectURIs:
          - 'https://<your-domain>'
        name: kubernetes
        secret: '<your-client-secret>'
        public: false
        trustedPeers:
          - mural

-

Next, configure the dex.config.staticPasswords section. We strongly recommend changing the default user (admin) and password (password) to strong values. The following example shows the default user and password in bcrypt format. Use a bcrypt hash generator to generate the password hash. The userID can be any unique string.

Warning: If you did not configure any OIDC connectors, you must configure at least one static user, which is used to access the PaletteAI UI. Static Dex users automatically inherit admin privileges through the service account. Dex does not support groups for local static users. To use groups for local static users, you must use the User Impersonation feature.

dex:
  config:
    staticPasswords:
      - email: 'admin@example.com'
        hash: '$2a$12$Ot2dJ0pmdIC2oXUDW/Ez1OIfhkSzLZIbsumsxkByuU3CUr02DtiC.'
        username: 'admin'
        userID: '08a8684b-db88-4b73-90a9-3cd1661f5466'

-

Configure the dex.ingress section to expose Dex. For host, replace <your-domain> with your domain. Do not change the className or the path. Because Transport Layer Security (TLS) is terminated at the load balancer, the tls section is empty.

dex:
  ingress:
    enabled: true
    className: 'nginx'
    annotations: {}
    hosts:
      - host: <your-domain>
        paths:
          - path: /dex
            pathType: ImplementationSpecific
    tls: []
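Several Dex and Canvas values are derived from the same domain: the Dex issuer ends in /dex, the connector redirectURI ends in /dex/callback, and the Canvas redirectURL and the mural static client redirect URI both end in /ai/callback. The following sketch prints all three from one domain so that they stay consistent. The function names and the sample domain are illustrative and are not part of PaletteAI.

```shell
#!/bin/sh
# Each helper takes a bare domain (no protocol) and prints one derived URL.
dex_issuer()      { printf 'https://%s/dex\n' "$1"; }           # dex.config.issuer
dex_callback()    { printf 'https://%s/dex/callback\n' "$1"; }  # connector redirectURI
canvas_callback() { printf 'https://%s/ai/callback\n' "$1"; }   # canvas.oidc.redirectURL and mural redirectURIs

# Illustrative domain; use the value you set in global.dns.domain.
DOMAIN="example.acme.org"
dex_issuer "$DOMAIN"
dex_callback "$DOMAIN"
canvas_callback "$DOMAIN"
```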

Flux2

-

Set flux2.policies.create to false to disable the Flux network policies. These policies, if enabled, prevent ingress traffic from reaching their target services.

flux2:
  policies:
    create: false

Info: This step is not required if the hub and all spoke clusters are configured to use a common, external OCI registry. An external OCI registry is configured in the fleetConfig.spokes[*].ociRegistry and hue.ociRegistry sections of the values.yaml file.

Ingress-Nginx

-

Use the ingress-nginx.controller.service.annotations section to add the SSL certificate to the ingress controller service. Replace <your-ssl-certificate-arn> with the Amazon Resource Name (ARN) of the SSL certificate you have in AWS Certificate Manager. Add the remaining annotations to terminate Transport Layer Security (TLS) at the load balancer and enforce HTTPS through the load balancer.

We recommend using at least ELBSecurityPolicy-TLS-1-2-2017-01 to enforce TLS 1.2. Review the Security policies for your Application Load Balancer guide for more information about the available policies.

ingress-nginx:
  controller:
    service:
      annotations:
        service.beta.kubernetes.io/aws-load-balancer-ssl-cert: <your-ssl-certificate-arn>
        service.beta.kubernetes.io/aws-load-balancer-ssl-ports: 'https'
        service.beta.kubernetes.io/aws-load-balancer-ssl-negotiation-policy: "ELBSecurityPolicy-TLS-1-2-2017-01"
        nginx.ingress.kubernetes.io/force-ssl-redirect: 'true'
        service.beta.kubernetes.io/aws-load-balancer-backend-protocol: 'http'

Zot

Info: If you prefer Amazon Elastic Container Registry (ECR) instead of the default in-cluster Zot registry, refer to Configure ECR Instead of Zot. You can also switch from Zot to ECR after installation.

-

Set zot.ingress.enabled to true and add the following annotations so the ingress routes traffic to Zot.

zot:
  ingress:
    enabled: true
    annotations:
      nginx.ingress.kubernetes.io/use-regex: "true"
      nginx.ingress.kubernetes.io/rewrite-target: /$1

Set zot.ingress.hosts[i].host to the domain you set in the global.dns.domain parameter.

zot:
  ingress:
    hosts:
      - host: my.domain.com
        paths:
          - path: /zot/(.*)

Set zot.httpGet.scheme to HTTP so the HTTP probe succeeds.

zot:
  httpGet:
    scheme: HTTP

Together, these configurations allow you to use a single load balancer instead of a second load balancer dedicated to Zot.

The resulting Ingress resources produce two redirect paths:

-

Requests for my.domain.com/zot/* are sent to the zot service endpoint with the /zot portion of the path removed.

-

Requests for my.domain.com/v2 and my.domain.com/v2/* are also sent to the zot service endpoint. Therefore, Zot owns my.domain.com/v2. No other service can expect to serve traffic through that route.
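The effect of the /zot/(.*) path combined with the /$1 rewrite target can be illustrated with an equivalent sed substitution. This is only a sketch of the path mapping described above, not a reproduction of how ingress-nginx evaluates rules. The function name rewrite_zot_path is hypothetical.

```shell
#!/bin/sh
# rewrite_zot_path PATH
# Mimics the ingress rule: capture everything after /zot/ and use it as the new path.
rewrite_zot_path() {
  printf '%s\n' "$1" | sed -E 's#^/zot/(.*)$#/\1#'
}

rewrite_zot_path "/zot/v2/_catalog"   # prints /v2/_catalog
rewrite_zot_path "/v2/_catalog"       # prints /v2/_catalog (no /zot prefix, so the path is unchanged)
```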

Modify the zot.configFiles.config.json section to remove the tls section. Transport Layer Security (TLS) terminates at the load balancer, so you do not need the tls section.

zot:
  configFiles:
    config.json: |-
      {
        "storage": { "rootDirectory": "/var/lib/registry" },
        "http": { "address": "0.0.0.0", "port": "5000", "auth": { "failDelay": 5, "htpasswd": { "path": "/secret/htpasswd" } } },
        "extensions": { "search": { "enable": true }, "ui": { "enable": false } },
        "log": { "level": "debug" }
      }

To add more users, add them to the htpasswd section. Use the htpasswd utility or a similar tool that can generate a bcrypt hash.

zot:
  secretFiles:
    htpasswd: |-
      admin:$2y$05$vmiurPmJvHylk78HHFWuruFFVePlit9rZWGA/FbZfTEmNRneGJtha
      user:$2y$05$L86zqQDfH5y445dcMlwu6uHv.oXFgT6AiJCwpv3ehr7idc0rI3S2G

If this configuration does not work in your environment, you may set zot.service.type: LoadBalancer and zot.ingress.enabled: false. This requires an additional load balancer and an additional DNS A or CNAME record. You can configure the DNS record after the Helm installation. However, you must pre-configure the following fields to use the correct DNS name:

- fleetConfig.spokes[i].ociRegistry.endpoint
- fleetConfig.spokeValuesOverrides.hue.ociRegistry.endpoint
- hue.ociRegistry.endpoint

Tip: If you use a dedicated load balancer for Zot and terminate TLS inside your cluster, the endpoint must include a :5000 suffix when provided to other services that need the registry endpoint (for example, oci://zot.my.domain.com:5000).

Install with Flux

Install PaletteAI with Flux to let Flux manage chart ordering and the Custom Resource Definition (CRD) lifecycle for both Helm charts.

-

Create mural-crds-oci-repository.yaml for the mural-crds chart.

cat << EOF > mural-crds-oci-repository.yaml
apiVersion: source.toolkit.fluxcd.io/v1
kind: OCIRepository
metadata:
  name: mural-crds
  namespace: mural-system
spec:
  interval: 10m
  ref:
    semver: "0.7.0-hotfix.4"
  url: oci://public.ecr.aws/mural/mural-crds
EOF

-

Create mural-oci-repository.yaml for the mural chart.

cat << EOF > mural-oci-repository.yaml
apiVersion: source.toolkit.fluxcd.io/v1
kind: OCIRepository
metadata:
  name: mural
  namespace: mural-system
spec:
  interval: 10m
  ref:
    semver: "1.0.7"
  url: oci://public.ecr.aws/mural/mural
EOF

-

Apply both OCIRepository resources to your cluster.

kubectl apply --filename mural-crds-oci-repository.yaml
kubectl apply --filename mural-oci-repository.yaml

-

Create mural-crds-helm-release.yaml for the mural-crds chart.

cat <<'EOF' > mural-crds-helm-release.yaml
apiVersion: helm.toolkit.fluxcd.io/v2
kind: HelmRelease
metadata:
  name: mural-crds
  namespace: mural-system
spec:
  interval: 10m
  chartRef:
    kind: OCIRepository
    name: mural-crds
    namespace: mural-system
  install:
    crds: Create
  upgrade:
    crds: CreateReplace
EOF

-

Create mural-helm-release.yaml for the mural chart. The dependsOn field ensures that Flux installs mural-crds before mural.

cat <<'EOF' > mural-helm-release.yaml
apiVersion: helm.toolkit.fluxcd.io/v2
kind: HelmRelease
metadata:
  name: mural
  namespace: mural-system
spec:
  interval: 10m
  chartRef:
    kind: OCIRepository
    name: mural
    namespace: mural-system
  dependsOn:
    - name: mural-crds
  values:
    # Paste the contents of your values.yaml file here.
EOF

-

Open mural-helm-release.yaml and replace the placeholder comment under spec.values with the contents of the values.yaml file for your environment. Keep the inserted YAML indented under spec.values.

-

Apply both HelmRelease resources to your cluster.

kubectl apply --filename mural-crds-helm-release.yaml
kubectl apply --filename mural-helm-release.yaml
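Pasting values.yaml under spec.values by hand makes it easy to lose the required indentation. As an alternative, the following sketch generates the mural HelmRelease manifest with the values file indented by four spaces. The function names indent_values and render_mural_helm_release are hypothetical, and the two-line sample values file exists only to make the sketch runnable. The manifest fields mirror the ones shown earlier in this section.

```shell
#!/bin/sh
# indent_values FILE
# Prints FILE with every non-empty line indented by four spaces.
indent_values() {
  sed 's/^\(.\)/    \1/' "$1"
}

# render_mural_helm_release VALUES_FILE
# Prints the mural HelmRelease with VALUES_FILE embedded under spec.values.
render_mural_helm_release() {
  cat <<'EOF'
apiVersion: helm.toolkit.fluxcd.io/v2
kind: HelmRelease
metadata:
  name: mural
  namespace: mural-system
spec:
  interval: 10m
  chartRef:
    kind: OCIRepository
    name: mural
    namespace: mural-system
  dependsOn:
    - name: mural-crds
  values:
EOF
  indent_values "$1"
}

# Illustrative run with a small sample values file.
sample_values="$(mktemp)"
printf 'global:\n  kubernetesProvider: EKS\n' > "$sample_values"
render_mural_helm_release "$sample_values"
rm -f "$sample_values"
```

To use it with your own file, you might redirect the output, for example render_mural_helm_release values.yaml > mural-helm-release.yaml, and review the result before you apply it.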

Install with Helm

Warning: If you do not use Flux, manage the mural-crds chart separately from the mural chart. Apply or upgrade Custom Resource Definitions (CRDs) out of band before you install or upgrade the mural chart. For the manual Helm workflow, refer to Upgrade Manually.

-

Install the mural-crds Helm chart first.

helm install mural-crds oci://public.ecr.aws/mural/mural-crds --version 0.7.0-hotfix.4 \
  --namespace mural-system --create-namespace --wait

Example output

NAME: mural-crds
LAST DEPLOYED: Tue May 27 09:34:33 2025
NAMESPACE: mural-system
STATUS: deployed
REVISION: 1

-

Install PaletteAI from the mural chart by using your environment's values.yaml file.

helm install mural oci://public.ecr.aws/mural/mural --version 1.0.7 \
  --namespace mural-system --create-namespace --values values.yaml --wait

Example output

NAME: mural
LAST DEPLOYED: Tue May 27 09:39:48 2025
NAMESPACE: mural-system
STATUS: deployed
REVISION: 1

Configure DNS

-

Once PaletteAI is deployed, fetch the EXTERNAL-IP of the load balancer deployed by ingress-nginx-controller.

kubectl get service ingress-nginx-controller --namespace mural-system

Example output

NAME                       TYPE           CLUSTER-IP       EXTERNAL-IP                                                               PORT(S)                      AGE
ingress-nginx-controller   LoadBalancer   10.104.129.101   a9d221d65b2fd41b3929574458e8ce05-1177779699.us-east-1.elb.amazonaws.com   80:31952/TCP,443:30926/TCP   41m

-

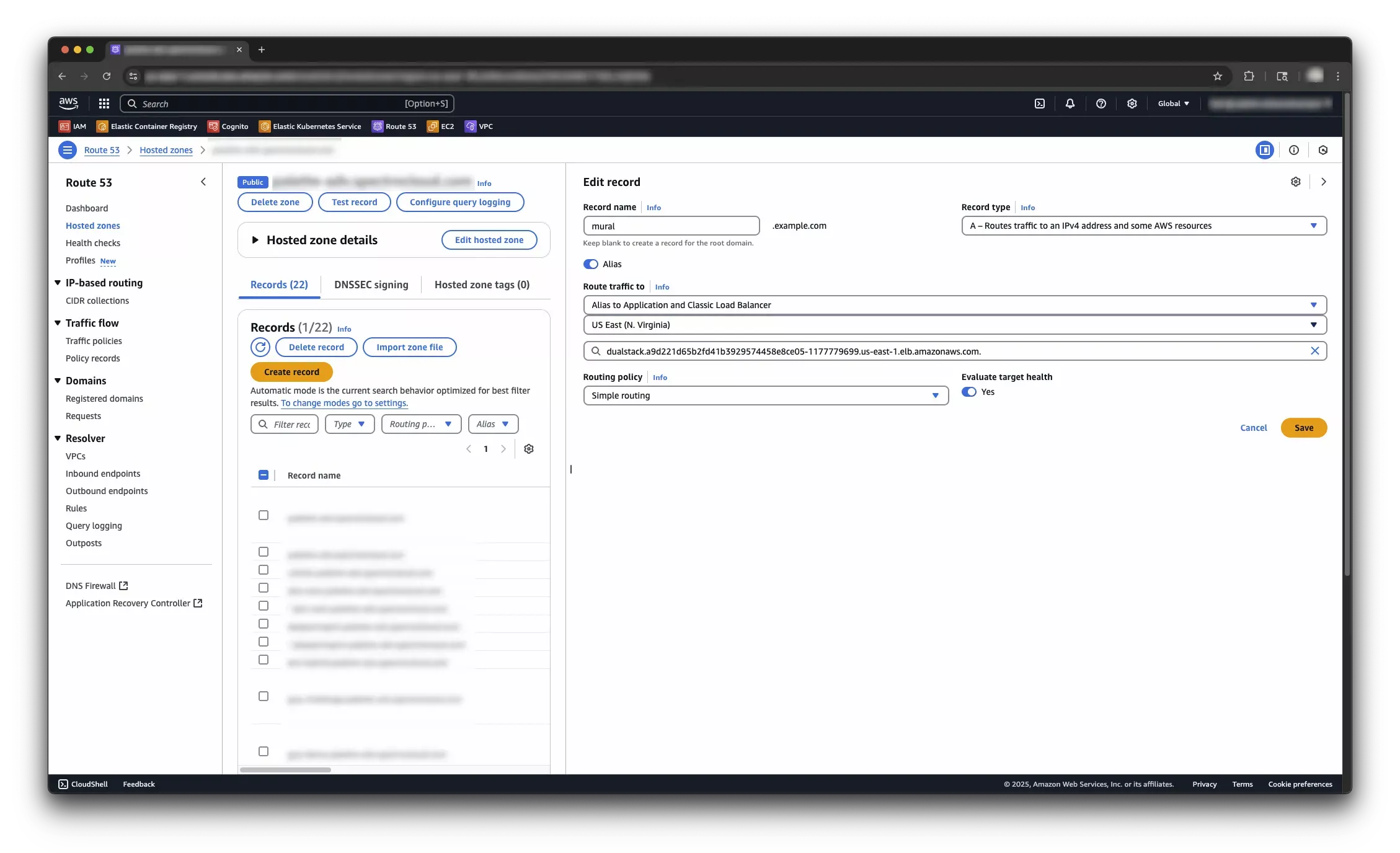

Create a DNS record that points to the load balancer address shown in the EXTERNAL-IP column. If you are using Route 53, create an A record in your domain's hosted zone and select the load balancer as the target. Refer to the Configure Amazon Route 53 alias record for your target DNS guide for more information. If you are using a different DNS provider, refer to the documentation for your DNS provider to create an alias record for the load balancer URL.

Info: It may take a few minutes for the DNS changes to take effect.
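When scripting the DNS step, the load balancer address can be pulled out of the kubectl get service table. The following sketch parses the tabular output with awk. The function name external_address is hypothetical, and the sample text is the example output from this guide rather than live cluster data.

```shell
#!/bin/sh
# external_address
# Reads `kubectl get service` table output on stdin and prints the
# EXTERNAL-IP value from the first data row.
external_address() {
  awk 'NR == 1 { for (i = 1; i <= NF; i++) if ($i == "EXTERNAL-IP") col = i }
       NR == 2 && col { print $col }'
}

# Sample output copied from this guide, used here instead of a live cluster.
external_address <<'EOF'
NAME                       TYPE           CLUSTER-IP       EXTERNAL-IP                                                               PORT(S)                      AGE
ingress-nginx-controller   LoadBalancer   10.104.129.101   a9d221d65b2fd41b3929574458e8ce05-1177779699.us-east-1.elb.amazonaws.com   80:31952/TCP,443:30926/TCP   41m
EOF
```

Against a live cluster, you would pipe the kubectl get service command into the function. Requesting the status.loadBalancer.ingress field with kubectl's jsonpath output is another option that avoids table parsing.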

You have now deployed PaletteAI on Amazon EKS. If you are using user impersonation or configured an OIDC provider, you can now log in to PaletteAI. You can also use the default Dex local user.

If you need to update PaletteAI later, review Helm Chart Configuration and then follow the Upgrade PaletteAI guide. For Flux-managed installations, update the mural HelmRelease or the relevant OCIRepository resource and let Flux reconcile the change. For manual Helm installations, follow the Upgrade Manually guide and apply mural-crds out of band before mural.

Validate

Take the following steps to verify that PaletteAI is deployed and configured correctly.

-

Open a browser and navigate to the domain URL you configured for PaletteAI.

-

Log in with the default username and password. If you configured Dex with an OIDC connector, log in with your identity provider.

Next Steps

Once you have installed PaletteAI, integrate Palette with PaletteAI by configuring the Settings resource. This resource requires a Palette tenant, project, and API key so PaletteAI can communicate with Palette and deploy AI/ML applications and models to the correct location.

Proceed to the Integrate with Palette guide to learn how to prepare your Palette environment.